A Data-Cleaning Case Study From Blackhole DEX

In this article, I want to walk through a real data issue we ran into at lpscanner.xyz: how we discovered it, how we analyzed it, and how we solved it.

Some parts of this article are written in italics. Those sections are more technical and mostly include SQL queries or database details. If you are not comfortable with SQL, you can safely skip them and still follow the main story.

Discovering the Problem

At lpscanner.xyz, we process concentrated liquidity pool data with a pipeline built around several event tables. For Blackhole DEX, the main tables are:

After fetching raw data from Avalanche C-Chain, we transform it into this structure and begin processing it.

pool_creations — one row per pool: chain, protocol, pool_address, token0/token1, fee, tickSpacing, initial tick and sqrtPriceX96, block metadata.

fee_changes — Blackhole pools can change their fee dynamically. Each fee change event is one row: pool_address, new fee value, block metadata.

liquidity_movements — every Mint/Burn: pool_address, salt (position ID), owner, tick range (tickLower, tickUpper), liquidityDelta, token amounts, block metadata.

swaps — every swap: sender, recipient, token amounts, sqrtPriceX96, liquidity at ending tick, tick, block metadata.

Our pipeline works like this:

First, for each pool, we reconstruct the initial liquidity state using liquidity movements from before the investigation window. In our current setup, that window is the last 30 days. That gives us a starting snapshot of the pool, which looks roughly like this:

tickBoundary → liquidityAmount [60, 120) → 10,910e6 ...

Then we merge all relevant events from the last 30 days into one ordered timeline: fee changes, liquidity movements, and swaps. We process them in on-chain execution order.

If the event is a liquidity movement, we update the liquidity state. If it is a fee change, we apply the new fee to upcoming swaps. If it is a swap, we identify which ticks it crossed so we can distribute volume and fees correctly across tick ranges and LP positions.

For heavy pools, this can already be expensive. Some pools have around 500,000 events, and roughly 20% of them are liquidity movements. Processing a pool like that takes about 1 hour in our current setup.

The Pool That Broke the Pipeline

About 10 days ago, we started processing Blackhole DEX pools and got stuck on this pool:

0xa02ec3ba8d17887567672b2cdcaf525534636ea0

CL1-WAVAX/USDC

At first, it looked like a normal "heavy pool" problem. But when we checked the numbers, something was clearly off:

That ratio did not make sense. Even with an active LP bot, you would expect liquidity management to be much more efficient. For example, if a bot adds liquidity before swaps and removes it afterward, you might expect something closer to 2× liquidity movements per swap — not nearly 9×.

Analyzing the Anomaly

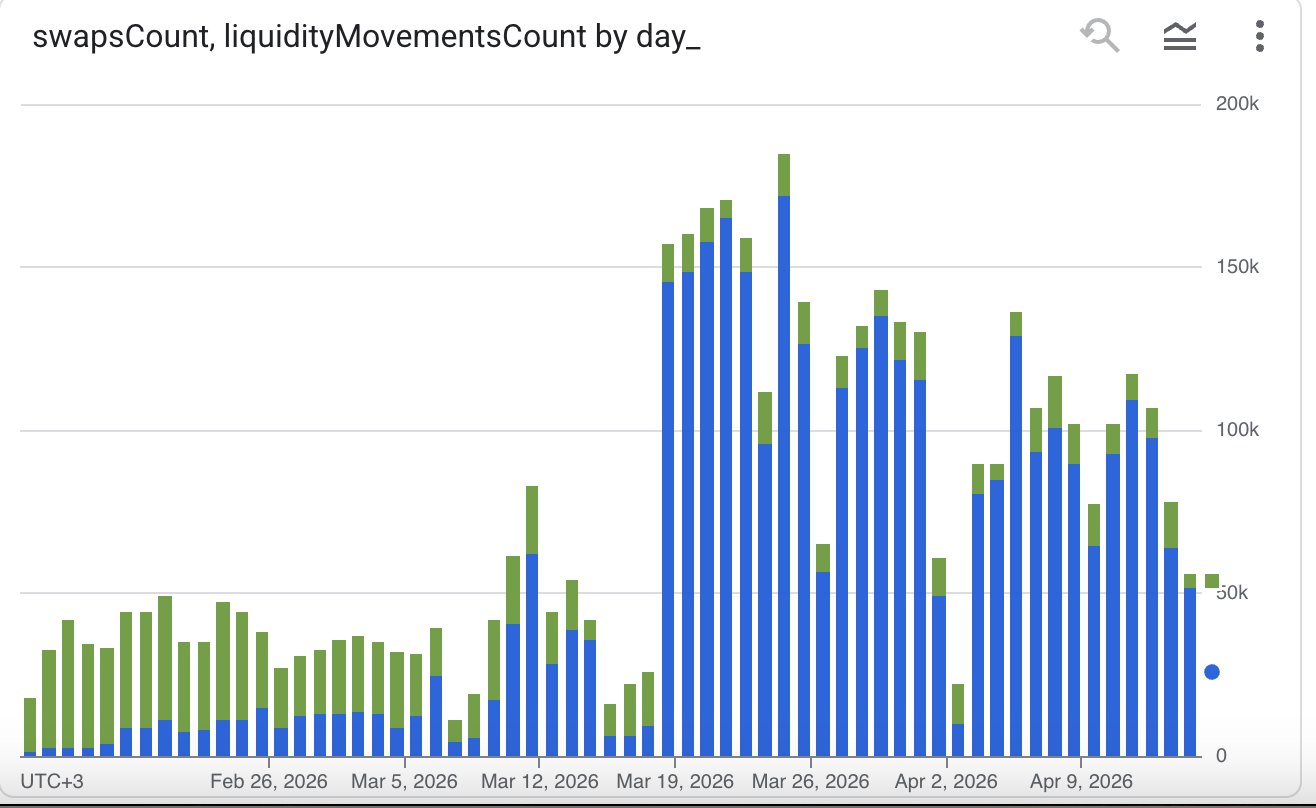

We graphed swap counts and liquidity movement counts for the pool over the last 60 days. Blue bars represent liquidity movements, green bars represent swaps.

The chart showed a very clear pattern: after March 18, liquidity movements jumped sharply while swaps did not rise in the same way. That immediately suggested that the issue was not organic trading activity — something else was generating a huge amount of liquidity events.

The graph above was created from the output of this query:

WITH

swaps_counted AS (

SELECT

TIMESTAMP_TRUNC(block_timestamp, DAY) AS day_

, COUNT(*) AS swapsCount

FROM `lpscanner.avalanche_blackhole_swaps`

WHERE block_timestamp > CURRENT_TIMESTAMP - INTERVAL 60 DAY

AND pool_address = '0xa02ec3ba8d17887567672b2cdcaf525534636ea0'

GROUP BY 1

)

, liquidity_movements_counted AS (

SELECT

TIMESTAMP_TRUNC(block_timestamp, DAY) AS day_

, COUNT(*) AS liquidityMovementsCount

FROM `lpscanner.avalanche_blackhole_liquidity_movements`

WHERE block_timestamp > CURRENT_TIMESTAMP - INTERVAL 60 DAY

AND pool_address = '0xa02ec3ba8d17887567672b2cdcaf525534636ea0'

GROUP BY 1

)

SELECT

sc.day_

, sc.swapsCount

, lmc.liquidityMovementsCount

FROM swaps_counted sc

LEFT JOIN liquidity_movements_counted lmc ON sc.day_ = lmc.day_

So we looked deeper.

We built a query to identify what we called unutilized liquidity movements: liquidity movements from positions that were opened and closed without being active for even a single swap.

To do that, we:

- merged swaps and liquidity movements into one unified timeline,

- tracked the latest swap seen before each event,

- reconstructed each position's liquidity cycle,

- and labeled a cycle as unutilized if the position opened and closed before any swap happened after its opening.

We use generatedIndex = block_number × 1,000,000 + log_index to preserve exact on-chain event ordering across merged event types.

What We Found

Out of 2,959,785 liquidity movements, 2,719,998 were labeled as unutilized. The vast majority of those liquidity events came from positions that were never active for even one swap — our processor was spending most of its time handling liquidity that had zero impact on fee distribution or executed trading volume.

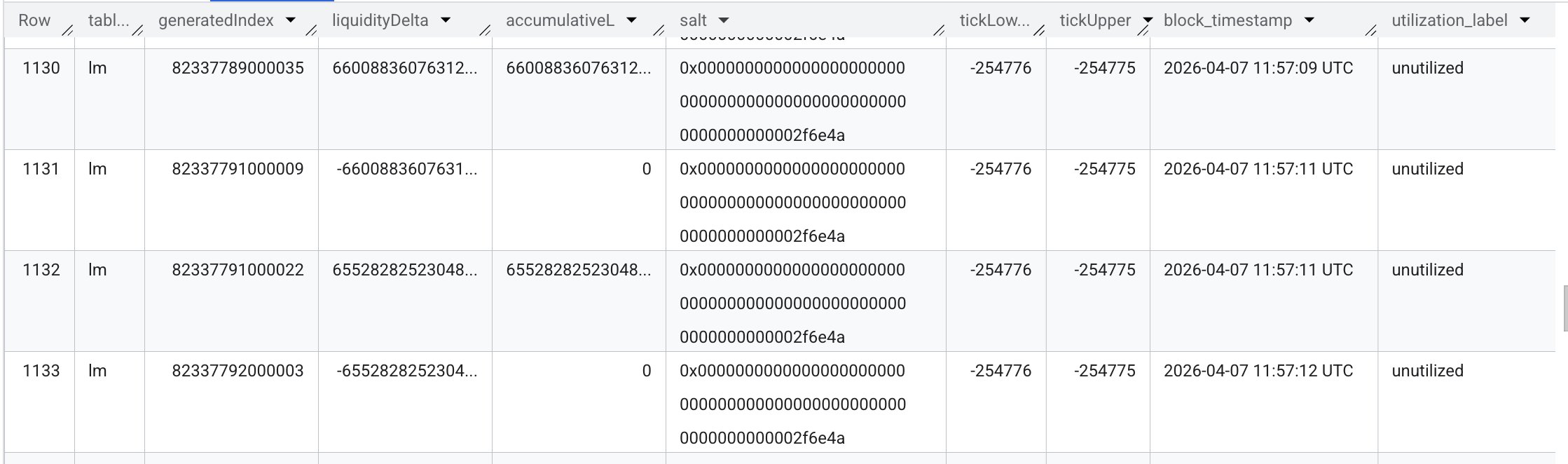

Before the query — here is a sample of its output. Some positions continuously add and remove liquidity without any swap event between them:

Here is the query that produces this table:

WITH

-- Positions active before the 30-day window that contribute to the initial liquidity state

active_positions_feed_before_the_term AS (

SELECT generatedIndex, liquidityDelta, ownerAddress, salt, tickLower, tickUpper, block_timestamp

FROM (

SELECT

SUM(liquidityDelta) OVER(PARTITION BY ownerAddress, salt, tickLower, tickUpper) AS cumulativeLiquidityByPosition

, generatedIndex, liquidityDelta, ownerAddress, salt, tickLower, tickUpper, block_timestamp

FROM lpscanner.avalanche_blackhole_liquidity_movements

WHERE pool_address = '0xa02ec3ba8d17887567672b2cdcaf525534636ea0'

AND generatedIndex < 80439933000022 -- limit of investigation period (block_number * 1e6 + log_index)

AND block_timestamp < CURRENT_TIMESTAMP() - INTERVAL 29 DAY -- partition pruning

)

WHERE cumulativeLiquidityByPosition > 0 -- only positions that were still active before the window

)

, the_list AS (

SELECT * FROM (

SELECT

'lm' AS table_

, generatedIndex, liquidityDelta

, SUM(liquidityDelta) OVER(

PARTITION BY salt, tickLower, tickUpper

ORDER BY generatedIndex

) AS accumulativeL -- cumulative liquidity for this position at this point in time

, salt, tickLower, tickUpper, block_timestamp

FROM (

SELECT generatedIndex, liquidityDelta, ownerAddress, salt, tickLower, tickUpper, block_timestamp

FROM lpscanner.avalanche_blackhole_liquidity_movements

WHERE pool_address = '0xa02ec3ba8d17887567672b2cdcaf525534636ea0'

AND generatedIndex >= 80439933000022

AND block_timestamp > CURRENT_TIMESTAMP() - INTERVAL 31 DAY

AND liquidityDelta != 0 -- liquidityDelta = 0 means fee-collection only, skip

UNION ALL

SELECT * FROM active_positions_feed_before_the_term

)

UNION ALL

-- Merge swaps into the unified timeline (NULL for lm-only columns)

SELECT

'swa' AS table_

, generatedIndex

, NULL AS liquidityDelta

, NULL AS accumulativeL

, NULL AS salt, NULL AS tickLower, NULL AS tickUpper

, block_timestamp

FROM lpscanner.avalanche_blackhole_swaps

WHERE pool_address = '0xa02ec3ba8d17887567672b2cdcaf525534636ea0'

AND generatedIndex >= 80439933000022

AND block_timestamp > CURRENT_TIMESTAMP() - INTERVAL 31 DAY

)

)

-- Attach the generatedIndex of the most recent swap seen so far to every event

, with_last_swap AS (

SELECT *,

MAX(IF(table_ = 'swa', generatedIndex, NULL))

OVER (ORDER BY generatedIndex ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW)

AS last_swap_gi

FROM the_list

)

, lm_events AS (

SELECT *,

LAG(accumulativeL, 1, 0)

OVER (PARTITION BY salt, tickLower, tickUpper ORDER BY generatedIndex)

AS prev_accumulativeL -- previous accumulated liquidity for this position

FROM with_last_swap

WHERE table_ = 'lm'

)

-- Carry forward the generatedIndex of the most recent open event per position.

-- An "open" is: prev_accumulativeL = 0 AND accumulativeL > 0.

, lm_with_cycle AS (

SELECT *,

LAST_VALUE(

IF(prev_accumulativeL = 0 AND accumulativeL > 0, generatedIndex, NULL)

IGNORE NULLS

) OVER (

PARTITION BY salt, tickLower, tickUpper

ORDER BY generatedIndex

ROWS BETWEEN UNBOUNDED PRECEDING AND CURRENT ROW

) AS cycle_open_gi

FROM lm_events

)

-- A cycle is unutilized if it closed before any swap occurred after its open

, unutilized_cycles AS (

SELECT DISTINCT salt, tickLower, tickUpper, cycle_open_gi

FROM lm_with_cycle

WHERE accumulativeL = 0

AND cycle_open_gi IS NOT NULL

AND cycle_open_gi >= 80439933000022

AND COALESCE(last_swap_gi, 0) < cycle_open_gi

)

-- Label every lm event — 'unutilized' if its cycle matches, 'utilized' otherwise

, lm_labeled AS (

SELECT l.*,

IF(u.cycle_open_gi IS NOT NULL, 'unutilized', 'utilized') AS utilization_label

FROM lm_with_cycle l

LEFT JOIN unutilized_cycles u USING (salt, tickLower, tickUpper, cycle_open_gi)

)

-- Final output: labeled lm events merged back with swap events

SELECT

table_, generatedIndex, liquidityDelta, accumulativeL,

salt, tickLower, tickUpper, block_timestamp,

utilization_label, last_swap_gi

FROM lm_labeled

UNION ALL

SELECT

table_, generatedIndex, NULL, NULL,

NULL, NULL, NULL, block_timestamp,

NULL AS utilization_label, last_swap_gi

FROM with_last_swap

WHERE table_ = 'swa'

ORDER BY generatedIndex

Solving the Problem

Once we confirmed that, the fix was simple: we dropped the matching unutilized liquidity movement rows from the last 30 days before running the heavy processing step.

After that cleaning step, we were able to process the pool successfully without overwhelming RAM or CPU resources.

Why This Matters

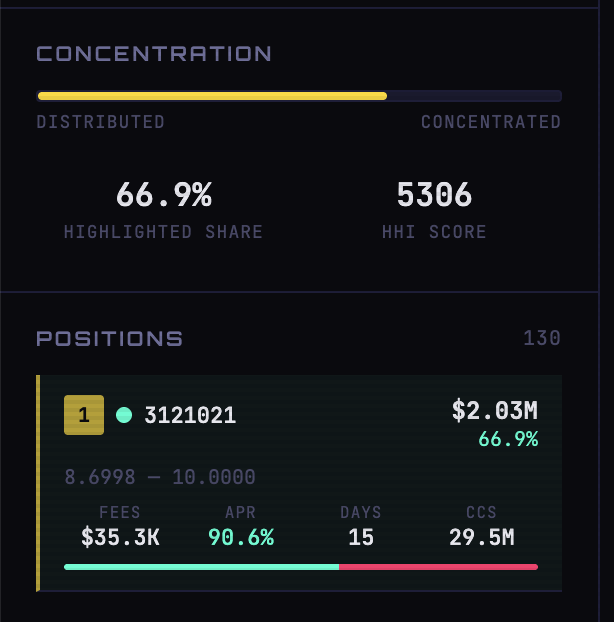

After cleaning the data, we successfully processed the pool and published its page on lpscanner: lpscanner.xyz → avalanche / blackhole / 0xa02ec3ba

One notable detail: the largest position in this pool holds 66.9% of total liquidity, and over the last 15 days it earned about $35.3K in swap fees.

But the investigation also raised a bigger question.

A large share of these suspicious liquidity movements appears to come from this address:

Contract: 0x07880d914bad719175099a362a88606eb975ffdc

Created ~20 days ago. Generally called by:

0xebd55519e589f6daa0a5284942266b0badf81e91

0x59630d884572199c5f7602cfa36005cef5d68787

That becomes especially interesting in the context of Retro9000 Round 2.

Effect on Retro9000?

At the time of writing, Blackhole DEX is leading the Retro9000 scoreboard with roughly ~1,040 AVAX burned across registered contracts. The pool we investigated is only 1 of 63 registered contracts.

And just from the never-utilized liquidity movements tied to this one contract, roughly 407 AVAX appears to have been burned.

Here is a simple query that anyone can run on a free Dune account and use as a starting point for their investigation:

SELECT

*

, SUM(total_gas_cost_avax) OVER (ORDER BY day_)

FROM (

SELECT

DATE_TRUNC('day', block_date) AS day_

, "from"

, COUNT(*) AS transaction_count

, SUM(CAST(gas_price * gas_used AS DECIMAL)) / POW(10, 18) AS total_gas_cost_avax

FROM avalanche_c.transactions

WHERE "to" = 0x07880d914bad719175099a362a88606eb975ffdc

GROUP BY 1, 2

)

Query and full output: dune.com/queries/7020324/10941117

That does not prove intent on its own. But it does create a legitimate question: were these transactions meaningful market-making activity, irrelevant external contract activity, or an attempt to farm Retro9000 through artificial on-chain load?

That part is still open.